Hi everyone-

The clocks have changed – officially the end of ‘daylight savings’ in the UK – does that mean we no longer try and save daylight? Certainly feels that way … definitely time for some satisfying data science reading materials while drying out from the rain!

Following is the November edition of our Royal Statistical Society Data Science and AI Section newsletter. Hopefully some interesting topics and titbits to feed your data science curiosity.

As always- any and all feedback most welcome! If you like these, do please send on to your friends- we are looking to build a strong community of data science practitioners. And if you are not signed up to receive these automatically you can do so here.

Industrial Strength Data Science November 2021 Newsletter

RSS Data Science Section

Committee Activities

We are all conscious that times are incredibly hard for many people and are keen to help however we can- if there is anything we can do to help those who have been laid-off (networking and introductions help, advice on development etc.) don’t hesitate to drop us a line.

We are pleased to announce our next virtual DSS meetup event, on Tuesday 23rd November at 5pm: “The National AI Strategy – boom or bust to your career in data science?”. Following on from our commentary on the UK Government’s AI Strategy (based on the excellent feedback from our community), and the pick-up we have received, we are going to run a focused event discussing this topic. You will hear key information about the strategy and have the opportunity to ask questions, provide input, and hear a panel of experts discuss the implications of the strategy for practitioners of AI in the UK. Save the date- all welcome!

Of course, the RSS never sleeps… so preparation for next year’s conference, which will take place in Aberdeen, Scotland from 12-15 September 2022, is already underway. The RSS is inviting proposals for invited topic sessions. These are put together by an individual, group of individuals or an organisation with a set of speakers who they invite to speak on a particular topic. The conference provides one of the best opportunities in the UK for anyone interested in statistics and data science to come together to share knowledge and network. Deadline for proposals is November 18th.

Martin Goodson continues to run the excellent London Machine Learning meetup and is very active in with events. The last talk was on October 27th where Anees Kazi, senior research scientist at the chair of Computer Aided Medical Procedure and Augmented Reality (CAMPAR) at Technical University of Munich, discussed “Graph Convolutional Networks for Disease Prediction“. Videos are posted on the meetup youtube channel – and future events will be posted here.

This Month in Data Science

Lots of exciting data science going on, as always!

Ethics and more ethics…

Bias, ethics and diversity continue to be hot topics in data science…

- Before we delve into our regular treasure trove of algorithmic misadventure, it’s worth remembering you don’t need sophisticated machine learning models to spread confusion and miss-information with numbers. MPs and civil servants in the UK have been called to account for misleading use of statistics in a far reaching review from the UK Statistics Authority.

- Amazon has unvelied it’s household robot, Astro, filled with impressive functionality and smarts…. Unfortunately, it has been almost immediately dubbed a ‘spybot’ after leaked documents highlighted just how much personal information it tracks.

- The BBC digs into the controversial US gun detection firm, ShotSpotter- a great example of attempting to solve a valuable problem, but where the costs are asymmetric and so implementation and oversight are critical.

- Exceedingly disappointing that the “racist visa algorithm” ever got implemented at the UK Home Office, but heartening to see that it has at least been withdrawn after judicial review, with Foxglove leading the charge.

“As far as we can tell, the algorithm is using problematic and biased criteria, like nationality, to choose which “stream” you get in. People from rich white countries get “Speedy Boarding”; poorer people of colour get pushed to the back of the queue.”- Facebook (or should we say ‘Meta’) has been in the news again for all the wrong reasons.. A former Facebook product manager, Frances Haugen, has testified in US congress on a wide range of topics, calling into question the company’s ethics as well as number of their reported metrics on the removal of miss-information. Previous analysis of their automated take down process highlights how easy it us to circumvent the system even if you believe the numbers reported.

"Facebook has been unwilling to accept even little slivers of profit being sacrificed for safety"- New research into the immense data sets used to train the large image recognition, language and ‘multi-modal’ deep learning models highlights just how careful we need to be in data curation and cleaning

- Separately, DeepMind details how hard it is to clean-up or ‘detoxify’ these large models.

- It’s not all doom and gloom though- there are positive applications of some of these AI driven tools, as this article points out, with at risk populations able to disguise their voices and gain protection via deep-fake approaches.

- One thing is for sure: ethical utilisation of AI is a complex issue that is not going away any time soon – Wired calls for a ‘Bill of Rights for an AI-Powered World‘

"In a competitive marketplace, it may seem easier to cut corners. But it’s unacceptable to create AI systems that will harm many people, just as it’s unacceptable to create pharmaceuticals and other products—whether cars, children’s toys, or medical devices—that will harm many people."Developments in Data Science…

As always, lots of new developments…

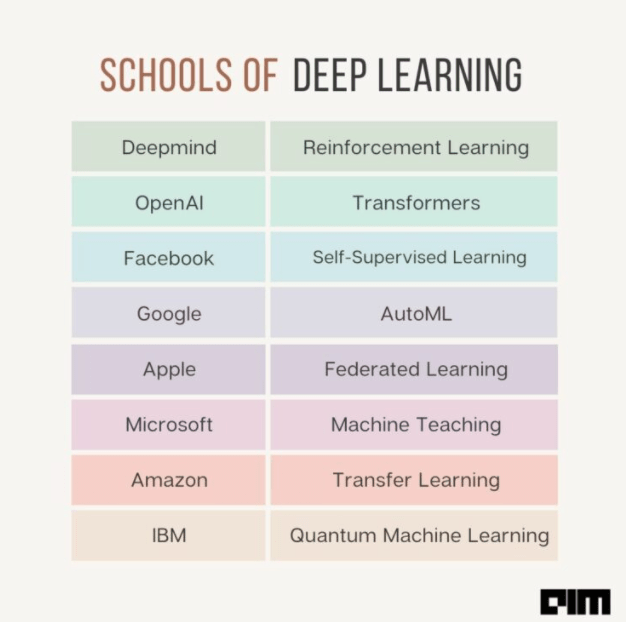

- Before delving into the research, it’s sometimes useful to step back and observe the lie of the land. Interesting perspective here on how the major players have ended up focusing in slightly different areas of deep learning research

- It’s certainly true that Facebook have made great strides in building out practical self-supervising techniques, while DeepMind are making great progress in Reinforcement Learning.

- Interesting research into the limits of large scale pre-training, uncovering examples where “to have a better downstream performance, we need to hurt upstream accuracy”

- Useful take on metrics for multi-task learning, applicable to a wide variety of Machine learning techniques

"It is important to not only look at average task accuracy -- which may be biased by easy or redundant tasks -- but also worst-case accuracy (i.e. the performance on the task with the lowest accuracy)."- Explainability and interpretability of large models continues to be a hot topic in research. This novel approach from the Google research team leverages GANs to explain the given classifier- very cool!

- Something we’ve been talking about recently: data trumps algos in many places…

"Classification, extractive question answering, and multiple choice tasks benefit so much from additional examples that collecting a few hundred examples is often "worth" billions of parameters"- The extent to which you can use synthetic data in machine learning always generates discussion. Microsoft Research highlights you can go far with facial analysis, with the potential benefits of improving diversity in data sets.

- The annual ‘State of AI’ report is always a weighty tome – this years’ comes in at 188 slides… Worth a skim to see what people are working on, but perhaps be wary of the predictions…

- This is very relevant – ‘editing’ models. We have talked about how some of the large data sets used to train the leading image and language models have questionable data quality. Is there a way of removing the influence of particular erroneous data points from the final model when they are identified? Researchers at Stanford University think so

"MEND can be trained on a single GPU in less than a day even for 10 billion+ parameter models; once trained MEND enables rapid application of new edits to the pre-trained model. Our experiments with T5, GPT, BERT, and BART models show that MEND is the only approach to model editing that produces effective edits for models with tens of millions to over 10 billion parameters"- This is an intriguing idea – training a Deep Learning model to predict the parameter values of a Deep Learning model... why you might ask? Because you can generate a final model in a single pass…

"By leveraging advances in graph neural networks, we propose a hypernetwork that can predict performant parameters in a single forward pass taking a fraction of a second, even on a CPU. The proposed model achieves surprisingly good performance on unseen and diverse networks"- I don’t pretent to understand all this, but I love the title… “FlingBot: The Unreasonable Effectiveness of Dynamic Manipulations for Cloth Unfolding“

- Whether or not Deep Learning techniques are useful for traditional tabular data can often be a point of contention – is the additional complexity really worth it? Useful research survey here.

- Finally, good to learn that it’s not just all about scale (more data, more compute)- our algorithms are getting better, at least according to research from MIT

Real world applications of Data Science

Lots of practical examples making a difference in the real world this month!

- Google has announced it plans to include multi-modal models in its search algorithms- learning from the linkages between text and images- good commentary here

“It holds out the promise that we can ask very complex queries and break them down into a set of simpler components, where you can get results for the different, simpler queries and then stitch them together to understand what you really want.”- Not sure I fully understand this to be honest, but differentiable biology definitely sounds exciting…

- Medical image classification continues to be an excellent application of machine learning, and google research have released some impressive work-

- Firstly a new self-supervising approach to improve classification accuracy

- And then exploring deep learning techniques focused on the long tail of outliers

- Great progress leveraging genome sequencing for diagnosing rare genetic diseases

- For those who like sports and data science, the ever evolving sphere of ‘sports analytics’ is a match made in heaven… fun commentary postulating we are now in the era of Moneyball 3.0!

- Not sure if this is the best use of AI, but certainly creative… completing Beethovens’ unfinished 10th Symphony…with some critical evaluation from a musical standpoint here

- Commentary from Wired (here and here) on the increasing costs of training models, and how this potentially puts the most advanced approaches out of reach of all but a very select few companies. All is not lost though – we have previously discussed many approaches to pruning and simplifying models without significant losses in accuracy, and in addition transfer learning from open source pre-trained models is now widely available. And some useful practical tips here on how to train big models cheaply..

- Perhaps we’ll all end up back in spreadsheets…. AI can even help us with that though! Amazing that a language based model architecture can be used to understand and predict spreadsheet formulae…

"To compute the embedding of the tabular context, it first uses a BERT-based architecture to encode several rows above and below the target cell (together with the header row). The content in each cell includes its data type (such as numeric, string, etc.) and its value, and the cell contents present in the same row are concatenated together into a token sequence to be embedded using the BERT encoder”How does that work?

A new section on understanding different approaches and techniques

- Why do neural networks generalise so well? Good question… let the BAIR help you out (well worth a read – note you may need to reload the page as it doesnt seem to take in-bound links)

"Perhaps the greatest of these mysteries has been the question of generalization: why do the functions learned by neural networks generalize so well to unseen data? From the perspective of classical ML, neural nets’ high performance is a surprise given that they are so overparameterized that they could easily represent countless poorly-generalizing functions."- Useful tutorial on approaches to cleaning up data– very practical

Practical tips

How to drive analytics and ML into production

- Does what it says on the tin… “ETL Pipelines with Airflow: the Good, the Bad and the Ugly”

- Useful “Data Exchange” podcast on MLOps and best practices for deploying ML into production

- Talk about practical… “How I got a job at DeepMind as a research engineer (without a machine learning degree!)”….

"Nobody cared that I speak 5 languages, that I know a bunch about how microcontrollers work in the tiniest of details, how an analog high-frequency circuit is built from bare metal, and how computers actually work. All of that is abstracted away. You only need…algorithms & data structures pretty much.”Bigger picture ideas

Longer thought provoking reads – a few more than normal, lean back and pour a drink!

- Linking modern deep learning architectures back to more basic building blocks to better understand how they work

"A number of researchers are showing that idealized versions of these powerful networks are mathematically equivalent to older, simpler machine learning models called kernel machines. If this equivalence can be extended beyond idealized neural networks, it may explain how practical ANNs achieve their astonishing results."- Is General AI striving for the wrong thing? Is generalisation more important that “consciousness”?

- Machine learning is not nonparametrics statistics with a retort…

- Less AI related and more philosophical around the scale of information available and what that means for attention… ‘stepping out of the firehose’, well worth a read from Benedict Evans.

"I wrote earlier this year about Morioka Shoten, a bookshop in Tokyo that only sells one book, and you could see this as an extreme reaction to a problem of infinite choice. Of course, like all these solutions it really only relocates the problem, because now you have to know about the shop instead of having to know about the book"Practical Projects and Learning Opportunities

As always here are a few potential practical projects to keep you busy:

- How about implementing your own prompt-learning setup

- Some fun AI/Art/Culture explorations

"Transcribing Japanese cursive writing found in historical literary works like this one is usually an arduous task even for experienced researchers. So we tested a machine learning model called KuroNet to transcribe these historical scripts."- Feeling like a bigger challenge? How about the Alexa Prize Simbot Challenge from Amazon

"A competition focused on helping advance development of next-generation virtual assistants that will assist humans in completing real-world tasks by harnessing generalizable AI methodologies such as continuous learning, teachable AI, multimodal understanding, and reasoning"Covid Corner

Although life seems to be returning to normal for many people in the UK, there is still lots of uncertainty on the Covid front… booster vaccinations are now rolling out in the UK, which is good news, but we still have exceedingly high community covid case levels due to the Delta variant and rising hospitalisations…

- The latest ONS Coronavirus infection survey estimates the current prevalence of Covid in the community in England to be roughly 1 in 50 people as high as it has ever been – crazy to think that 2% of the UK population have Covid right now… Back in May the prevalence was less than 1 in 1000..

- The UK remains a significant outlier in terms of policy on vaccinating children against Covid, and recent decisions have been called out as actively propagating the concept of herd immunity and going against scientific evidence.

"From the viewpoint of some JCVI members, children aren’t independent agents with a right to be protected from a potentially dangerous virus. Rather, because they can serve as human shields for more vulnerable adults, it’s downright good when children get sick. They explicitly stated that “natural infection in children could have substantial long-term benefits for COVID-19 in the UK.” Not only is this scientific nonsense, as the high number of infections in the UK clearly shows, it’s a moral abomination"Updates from Members and Contributors

- Sorry we didnt do more publicity around PyData Global 2021 … it just happened last week. Many congrats to Kevin O’Brien one of the main organisers and to Marco Gorelli for his talk on Bayesian Ordered Logistic Regression!

- Ronald Richman has just published a new paper on explainable deep learning which looks very interesting.

- Sarah Phelps invites everyone to what looks to be an excellent webinar hosted by the UK ONS Data Science Campus:

- “The UK Office for National Statistics Data Science Campus and UNECE HLG-MOS invite you to join them for the ONS-UNECE Machine Learning Group 2021 Webinar on 19 November. “

- “The webinar will provide an opportunity to learn about the progress that the Group has made this year in its different work areas, from coding and classification and satellite imagery to operationalisation and data ethics. Bringing together colleagues from across the global official statistics community, it will include contributions from senior figures in the data science divisions of various NSOs as well as discussion on the priorities for advancing the use of machine learning in official statistics in 2022.”

Again, hope you found this useful. Please do send on to your friends- we are looking to build a strong community of data science practitioners- and sign up for future updates here.

– Piers

The views expressed are our own and do not necessarily represent those of the RSS